The Sync Engine From Hell

Google Calendar has a dirty secret. If you create events through an unverified OAuth app, Google Workspace silently strips your metadata.

No error. No warning. No field in the response telling you "hey, we deleted half your event." It just vanishes — summary, description, extendedProperties — all gone. The event exists, but it's a hollow shell. A ghost with a time slot and nothing else.

I found this out at 5:34 AM on a Saturday, staring at 300+ orphaned "(No title)" events scattered across four calendars.

This is the story of how I built a sync engine, watched it eat itself, and rebuilt it in a single day.

What BusyGuard Does

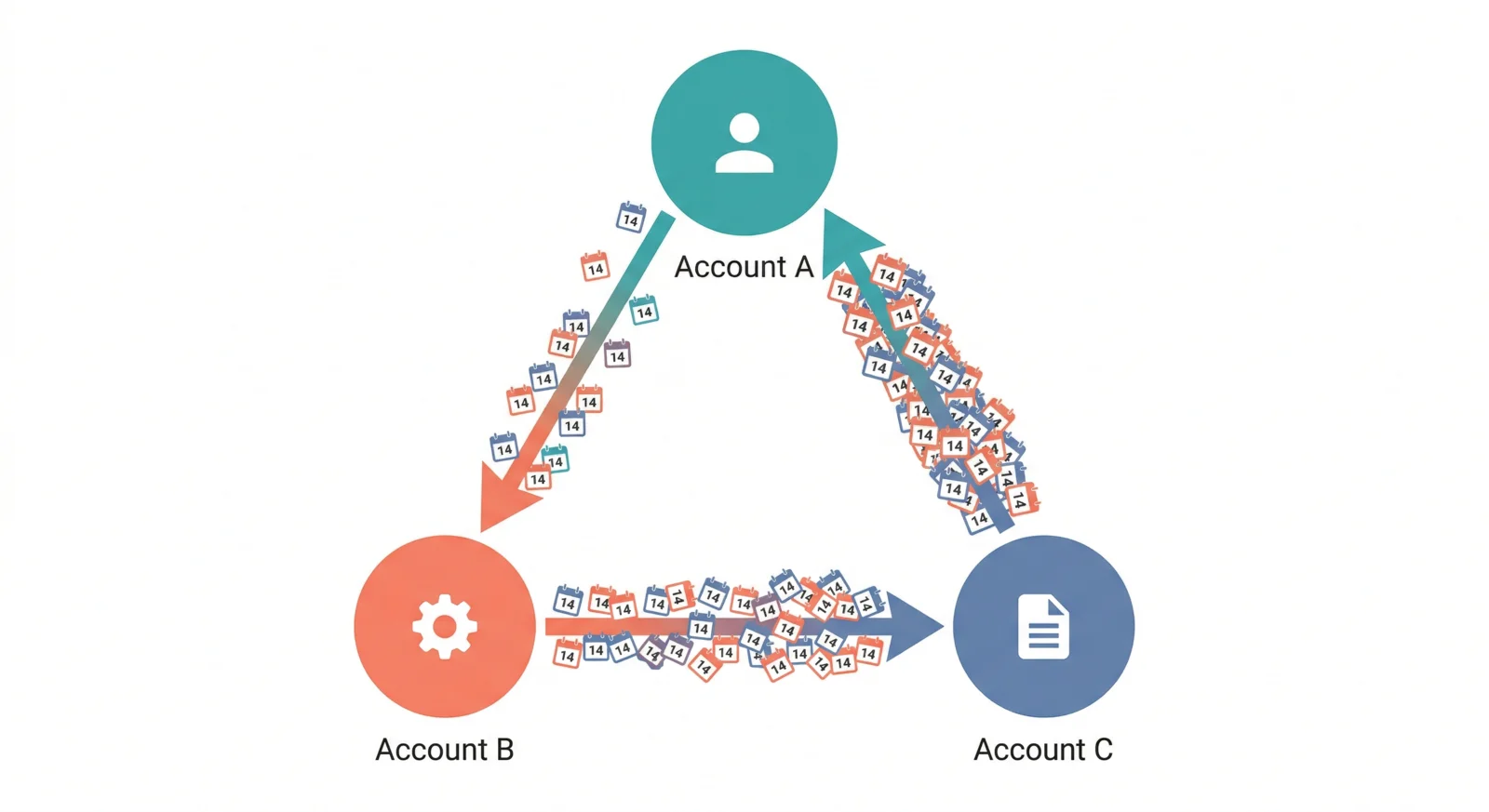

The idea is simple. If you have multiple Google accounts — personal, work, freelance — your calendars don't talk to each other. You end up double-booked because your work calendar can't see your personal dentist appointment.

BusyGuard connects your accounts and creates "Busy" blocks across them. Dentist at 3 PM on your personal calendar? A busy block appears on your work calendar. Meeting at 10 AM on work? Your personal calendar knows.

The concept is dead simple. The implementation is a minefield.

Day 1: It Works (March 20)

I scaffolded the whole thing in an afternoon. Next.js, Supabase, Google Calendar API. OAuth flow, webhook registration, sync engine. By 6 PM, it was deployed on Vercel and syncing events between two accounts.

Four commits. Everything green.

That was the last time everything was green for a while.

Day 2: The First Blood (March 21)

The moment I connected a third account, events started doubling. The sync engine would see a "Busy" event it had just created on Account B, interpret it as a real event, and create another "Busy" block for it on Account C. Account C's new block would then propagate back to Account A. Classic infinite loop.

My first fix was straightforward — check if an event was created by BusyGuard before propagating it. I tagged every created event with extendedProperties.private.busyguard = "managed" and filtered those out during sync.

    function isManagedEvent(event: GoogleEvent): boolean {
      return event.extendedProperties?.private?.busyguard === 'managed';
    }

Clean. Elegant. Completely useless — but I didn't know that yet.

I also added a unique constraint on the database: one busy block per source event per target calendar. Belt and suspenders. If the filter missed something, the DB would catch the duplicate on insert.
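A minimal sketch of that "belt and suspenders" insert, for illustration only and not BusyGuard's actual code. `BlockStore` is a hypothetical in-memory stand-in for a table with a unique constraint on (source event, target calendar), and `recordBlockOnce` is an assumed helper name. `23505` is the Postgres SQLSTATE for a unique violation, which is what a real database would report on the duplicate insert.

```typescript
// Hypothetical in-memory stand-in for a table with a UNIQUE constraint
// on (source_event_id, target_calendar_id).
type InsertResult = { ok: true } | { ok: false; code: string };

class BlockStore {
  private keys = new Set<string>();

  insert(sourceEventId: string, targetCalendarId: string): InsertResult {
    const key = `${sourceEventId}::${targetCalendarId}`;
    if (this.keys.has(key)) {
      // 23505 is the Postgres SQLSTATE for unique_violation.
      return { ok: false, code: '23505' };
    }
    this.keys.add(key);
    return { ok: true };
  }

  count(): number {
    return this.keys.size;
  }
}

// Returns true if a new block was recorded, false if it already existed.
// Any other failure is rethrown rather than silently swallowed.
function recordBlockOnce(
  store: BlockStore,
  sourceEventId: string,
  targetCalendarId: string
): boolean {
  const result = store.insert(sourceEventId, targetCalendarId);
  if (result.ok) return true;
  if (result.code === '23505') return false; // duplicate: safe to ignore
  throw new Error(`insert failed with code ${result.code}`);
}
```

The point of the pattern is that the duplicate check and the write are one atomic step in the database, so two racing syncs cannot both "win".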

Deployed. Tested. Worked perfectly on my personal Gmail accounts.

Then I connected a Google Workspace account.

Day 2.5: The Escalation (March 22, 5 AM)

I woke up to a calendar that looked like it had been carpet-bombed. Hundreds of "Busy" and "(No title)" events, layered on top of each other, spanning two weeks. The sync engine had been running in a loop all night, each webhook triggering another sync, each sync creating more events, each event triggering more webhooks.

Here's what happened: Google Workspace treats unverified OAuth apps differently from personal Gmail. When you call events.insert with a summary, description, and extendedProperties, Workspace accepts the request, returns 200 with an event ID, and then quietly strips those fields. The event is created, but it's lobotomized.

My isManagedEvent() filter? It was checking extendedProperties.private.busyguard. That field didn't exist anymore. Every event BusyGuard created looked like a normal user event to the next sync cycle.
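To make the failure concrete, here is a small sketch of the filter applied to the same event before and after stripping. The `GoogleEvent` type is a simplified assumption covering only the fields discussed here, and both sample objects are made up for illustration.

```typescript
// Simplified, assumed shape: only the fields this article talks about.
interface GoogleEvent {
  id: string;
  summary?: string;
  description?: string;
  extendedProperties?: { private?: { busyguard?: string } };
}

function isManagedEvent(event: GoogleEvent): boolean {
  return event.extendedProperties?.private?.busyguard === 'managed';
}

// The event as it was sent, and as it comes back when metadata survives.
const intactBlock: GoogleEvent = {
  id: 'abc123',
  summary: 'Busy',
  description: '[BusyGuard] Managed busy block',
  extendedProperties: { private: { busyguard: 'managed' } },
};

// The same event after stripping: only the ID (and the time slot,
// omitted here) is left.
const strippedBlock: GoogleEvent = { id: 'abc123' };

console.log(isManagedEvent(intactBlock));   // true
console.log(isManagedEvent(strippedBlock)); // false
```

A stripped block is indistinguishable from an untitled event a person created by hand, which is why a content-based filter cannot recover from this.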

The unique constraint in the DB caught some duplicates. But events that were created for different source events — or events where the source had shifted by a few seconds due to timezone normalization — slipped through.

My first instinct was to patch the metadata back. If events.insert strips the fields, maybe events.patch can restore them:

    // After insert, patch the fields back
    await calendar.events.patch({
      calendarId,
      eventId: createdEvent.id,
      requestBody: {
        summary: 'Busy',
        description: '[BusyGuard] Managed busy block',
        extendedProperties: { private: { busyguard: 'managed' } }
      }
    });

Nope. Workspace strips those too on PATCH from unverified apps.

Next I tried events.update (PUT) with the full event body. extendedProperties was stripped again, but this time summary and description survived, which they had not on insert. Inconsistent behavior from the same API. Wonderful.

The Nuclear Option (March 22, 6 AM)

With events still multiplying, I did two things in rapid succession:

- Disabled the webhook handler entirely. Stop the bleeding.

- Built a "nuclear cleanup" endpoint that deleted every event with summary "Busy" or "(No title)" across all included calendars.

    feat: add nuclear cleanup to delete all Busy and (No title) events

That's an actual commit message I wrote at 6:15 AM. Not my proudest moment, but it worked. Hundreds of phantom events, gone. A clean slate.
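The selection step of such a cleanup might look like the sketch below. The names `ListedEvent`, `isCleanupTarget`, and `selectCleanupTargets` are hypothetical. It also assumes, which the text does not state, that an untitled event comes back from the API with a missing or empty summary and that "(No title)" is only how the calendar UI displays it; the literal string is matched as well, just in case.

```typescript
interface ListedEvent {
  id: string;
  summary?: string;
}

// Assumption: "(No title)" is how the UI renders an event whose summary
// is missing or empty, so a blank summary is treated as a match.
function isCleanupTarget(ev: ListedEvent): boolean {
  const title = (ev.summary ?? '').trim();
  return title === '' || title === 'Busy' || title === '(No title)';
}

// Returns the IDs of events a nuclear cleanup would delete.
function selectCleanupTargets(events: ListedEvent[]): string[] {
  return events.filter(isCleanupTarget).map((ev) => ev.id);
}
```

This is "nuclear" for a reason: it cannot tell a phantom block from a real event someone happened to title "Busy" or leave untitled, so it only makes sense as an emergency tool.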

But cleanup isn't a fix. I needed the sync to actually work without metadata as a safety net.

The Rebuild (March 22, 9 AM - 12 PM)

Coffee. Deep breath. Architecture from scratch.

The core problem was clear: I couldn't trust Google to preserve any metadata on events created by my app. Every detection mechanism that relied on event content — extendedProperties, summary patterns, description markers — was unreliable at best and completely absent at worst.

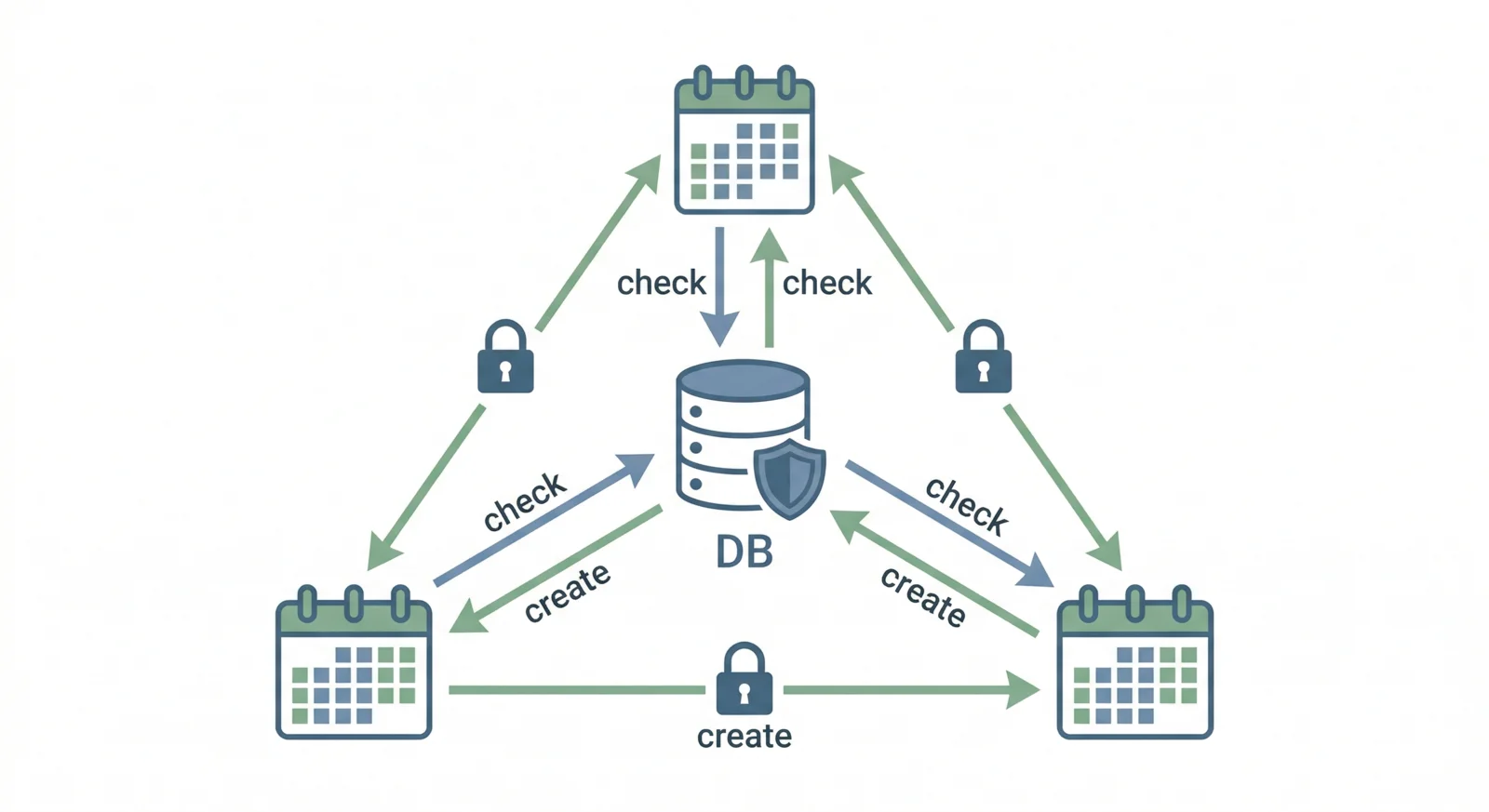

The only thing I could trust was my own database.

Here's the new architecture:

Layer 1: DB as sole source of truth. Every busy block BusyGuard creates gets a row in managed_busy_blocks with the Google event ID. During sync, before creating a new event, check if the source event already has a corresponding busy block in the DB. If the DB says "I already created a busy block for this event on that calendar," skip it. Don't even look at the Google event.

Layer 2: Cross-account primary-only targeting. Stop syncing between calendars on the same account. Only write busy blocks to the primary calendar of other accounts. This alone eliminated 67% of the sync pairs — from 12 to 4 in my test setup.
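The arithmetic behind "12 to 4" can be sketched as below. The test setup is not described in detail, so the layout here (two accounts, each with one primary and one secondary calendar) is an assumption chosen because it reproduces those numbers; all type and function names are illustrative.

```typescript
interface CalendarRef {
  id: string;
  accountId: string;
  isPrimary: boolean;
}

type SyncPair = { source: string; target: string };

// Old behavior: every calendar writes to every other calendar.
function allPairs(calendars: CalendarRef[]): SyncPair[] {
  const pairs: SyncPair[] = [];
  for (const source of calendars) {
    for (const target of calendars) {
      if (source.id !== target.id) pairs.push({ source: source.id, target: target.id });
    }
  }
  return pairs;
}

// New behavior: only write to the primary calendar of a different account.
function crossAccountPrimaryPairs(calendars: CalendarRef[]): SyncPair[] {
  const pairs: SyncPair[] = [];
  for (const source of calendars) {
    for (const target of calendars) {
      if (target.isPrimary && target.accountId !== source.accountId) {
        pairs.push({ source: source.id, target: target.id });
      }
    }
  }
  return pairs;
}

// Assumed layout: two accounts with a primary and a secondary calendar each.
const sampleCalendars: CalendarRef[] = [
  { id: 'a-primary', accountId: 'a', isPrimary: true },
  { id: 'a-secondary', accountId: 'a', isPrimary: false },
  { id: 'b-primary', accountId: 'b', isPrimary: true },
  { id: 'b-secondary', accountId: 'b', isPrimary: false },
];

console.log(allPairs(sampleCalendars).length);                 // 12
console.log(crossAccountPrimaryPairs(sampleCalendars).length); // 4
```

Eight of the twelve pairs disappear, which is the 67% reduction.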

Layer 3: Per-user sync lock. One sync at a time, per user. Insert-based lock in Supabase with a 1-minute TTL. If a webhook fires while a sync is running, it gets a 409 and backs off. No more concurrent syncs fighting over the same events.
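A rough sketch of how a lock like this behaves, using an in-memory map instead of a database table. `SyncLock` and `webhookStatus` are hypothetical names. In a real database the "insert fails if the row exists" step would be enforced atomically by a primary key or unique constraint on the user ID; this version only illustrates the TTL logic.

```typescript
// In-memory stand-in for a lock table with one row per user.
class SyncLock {
  private expiresAt = new Map<string, number>();
  private ttlMs: number;

  constructor(ttlMs: number = 60000) {
    this.ttlMs = ttlMs;
  }

  // Returns true if the lock was acquired. An expired lock is treated as
  // abandoned (for example, after a crashed sync) and is taken over.
  tryAcquire(userId: string, now: number = Date.now()): boolean {
    const expiry = this.expiresAt.get(userId);
    if (expiry !== undefined && expiry > now) return false;
    this.expiresAt.set(userId, now + this.ttlMs);
    return true;
  }

  release(userId: string): void {
    this.expiresAt.delete(userId);
  }
}

// Maps the lock result to the HTTP status a webhook handler would return.
function webhookStatus(lock: SyncLock, userId: string, now: number): number {
  return lock.tryAcquire(userId, now) ? 200 : 409;
}
```

The TTL matters as much as the lock itself: without it, a sync that dies before releasing would block that user forever.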

Layer 4: Webhook debounce. Even with the lock, rapid webhook fires were wasteful. Added a 30-second debounce using calendars.last_sync_at. If the last sync was less than 30 seconds ago, skip.
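The debounce check itself is a one-line comparison. A minimal sketch, assuming last_sync_at is stored as an ISO timestamp string and is null for a calendar that has never synced (`shouldSkipSync` is a hypothetical name):

```typescript
const DEBOUNCE_MS = 30000;

// lastSyncAt mirrors a calendars.last_sync_at column: an ISO timestamp,
// or null if the calendar has never been synced.
function shouldSkipSync(
  lastSyncAt: string | null,
  now: Date,
  debounceMs: number = DEBOUNCE_MS
): boolean {
  if (lastSyncAt === null) return false;
  const elapsedMs = now.getTime() - new Date(lastSyncAt).getTime();
  return elapsedMs < debounceMs;
}
```

Passing `now` in as a parameter, rather than reading the clock inside the function, keeps the check easy to test.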

Layer 5: Reduced blast radius. Sync window from 14 days to 2 days (today + tomorrow). If something goes wrong, the damage is capped.
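A sketch of computing that two-day window as the timeMin and timeMax bounds that events.list accepts. The function name is hypothetical, and cutting days at UTC midnight is a simplifying assumption; a real implementation would more likely use the user's own time zone.

```typescript
// Returns the window covering "today + tomorrow" as ISO timestamps.
// Assumption: days are cut at UTC midnight.
function syncWindow(now: Date, days: number = 2): { timeMin: string; timeMax: string } {
  const startMs = Date.UTC(now.getUTCFullYear(), now.getUTCMonth(), now.getUTCDate());
  const endMs = startMs + days * 24 * 60 * 60 * 1000;
  return {
    timeMin: new Date(startMs).toISOString(),
    timeMax: new Date(endMs).toISOString(),
  };
}
```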

    // The old way: trust Google metadata
    if (isManagedEvent(event)) continue; // BROKEN

    // The new way: trust the database
    const existingBlock = await db
      .from('managed_busy_blocks')
      .select('id')
      .eq('source_event_id', event.id)
      .eq('target_calendar_id', targetCalendarId)
      .maybeSingle(); // zero rows is the normal "not synced yet" case, not an error

    if (existingBlock.data) continue; // RELIABLE

I kept isManagedEvent() as a bonus layer for personal Gmail accounts where it actually works. But the system doesn't depend on it. Any single layer failing is survivable.

The Proof (March 22, 12:44 PM)

After deploying the rebuild, I wrote a test that simulated the exact scenario that broke everything:

    test: prove sync engine is duplicate-proof across 3 layers

    15 tests covering targeting (cross-account primary-only), loop
    detection (managedEventIds), and DB idempotency (unique constraint).
    Full simulation: 10 events across 4 calendars = 10 blocks, second
    sync = zero growth.

Ten events across four calendars. First sync: 10 busy blocks created. Second sync: zero new blocks. Third sync: zero. The engine was idempotent.

Old architecture: 10 events, 4 calendars, 12 sync pairs. New architecture: same events, same calendars, 4 sync pairs. The targeting fix alone would have prevented most of the cascade.
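The shape of that simulation can be sketched in a few dozen lines. This is not the actual test suite: every name below is hypothetical, the calendar layout (two accounts with two calendars each) is an assumption, and "Google" is just an array. Created busy blocks are deliberately stored with no metadata at all, so the only things preventing a loop are the database-style records.

```typescript
interface SimCalendar { id: string; accountId: string; isPrimary: boolean }
interface SimEvent { id: string; calendarId: string }
interface SimBlock { sourceEventId: string; targetCalendarId: string; googleEventId: string }

// `events` is everything the calendar API would return, including busy
// blocks whose metadata has been stripped. `blocks` stands in for the
// managed_busy_blocks table.
interface SimWorld { events: SimEvent[]; blocks: SimBlock[] }

// One sync pass. Returns the number of busy blocks it created.
function runSyncPass(calendars: SimCalendar[], world: SimWorld): number {
  const managedIds = new Set(world.blocks.map((b) => b.googleEventId));
  const done = new Set(world.blocks.map((b) => `${b.sourceEventId}->${b.targetCalendarId}`));
  let created = 0;

  // Iterate over a copy, since the pass appends new events as it goes.
  for (const ev of world.events.slice()) {
    if (managedIds.has(ev.id)) continue; // our own block: never a source
    const source = calendars.find((c) => c.id === ev.calendarId);
    if (!source) continue;

    for (const target of calendars) {
      if (!target.isPrimary || target.accountId === source.accountId) continue;
      const key = `${ev.id}->${target.id}`;
      if (done.has(key)) continue; // already synced: skip
      const googleEventId = `block-${world.blocks.length}`;
      world.events.push({ id: googleEventId, calendarId: target.id });
      world.blocks.push({ sourceEventId: ev.id, targetCalendarId: target.id, googleEventId });
      done.add(key);
      created++;
    }
  }
  return created;
}

const simCalendars: SimCalendar[] = [
  { id: 'a-primary', accountId: 'a', isPrimary: true },
  { id: 'a-secondary', accountId: 'a', isPrimary: false },
  { id: 'b-primary', accountId: 'b', isPrimary: true },
  { id: 'b-secondary', accountId: 'b', isPrimary: false },
];

// Ten source events spread round-robin across the four calendars.
const simWorld: SimWorld = {
  events: Array.from({ length: 10 }, (_, i) => ({
    id: `evt-${i}`,
    calendarId: simCalendars[i % 4].id,
  })),
  blocks: [],
};

console.log(runSyncPass(simCalendars, simWorld)); // 10
console.log(runSyncPass(simCalendars, simWorld)); // 0
console.log(runSyncPass(simCalendars, simWorld)); // 0
```

Removing either `continue` guard is a quick way to watch the count grow again on the second pass.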

The Lesson Nobody Tells You

Google Calendar's documentation doesn't mention that Workspace strips metadata from unverified apps. I found one Stack Overflow answer from 2019 buried in a thread about a different problem. One.

If you're building anything that creates events through the Google Calendar API and your app isn't verified — which it won't be during development, and might never be if it's an indie project — do not rely on extendedProperties. Do not rely on description. Do not rely on summary surviving the insert.

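Use your own database as the source of truth. Identify the events you created by IDs recorded in that database, such as the Google event ID returned on insert, not by anything stored on the event itself. Verify everything after creation.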
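"Verify after creation" can be as simple as diffing what was sent against what a follow-up read (for example, an events.get call) returns. A minimal sketch, with a hypothetical `findStrippedFields` helper and a simplified event shape:

```typescript
type StringMap = { [key: string]: string };

interface EventFields {
  summary?: string;
  description?: string;
  extendedProperties?: { private?: StringMap };
}

// Compares what was sent with what a follow-up read returned and lists
// the fields that did not survive.
function findStrippedFields(sent: EventFields, readBack: EventFields): string[] {
  const missing: string[] = [];
  if (sent.summary !== undefined && readBack.summary !== sent.summary) {
    missing.push('summary');
  }
  if (sent.description !== undefined && readBack.description !== sent.description) {
    missing.push('description');
  }
  const sentPrivate: StringMap = sent.extendedProperties?.private ?? {};
  const gotPrivate: StringMap = readBack.extendedProperties?.private ?? {};
  for (const key of Object.keys(sentPrivate)) {
    if (gotPrivate[key] !== sentPrivate[key]) {
      missing.push(`extendedProperties.private.${key}`);
    }
  }
  return missing;
}
```

A non-empty result on the first event created for an account is a cheap early warning that content-based detection will not work there.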

The Google Calendar API is a friendly handshake that pickpockets your metadata on the way out.

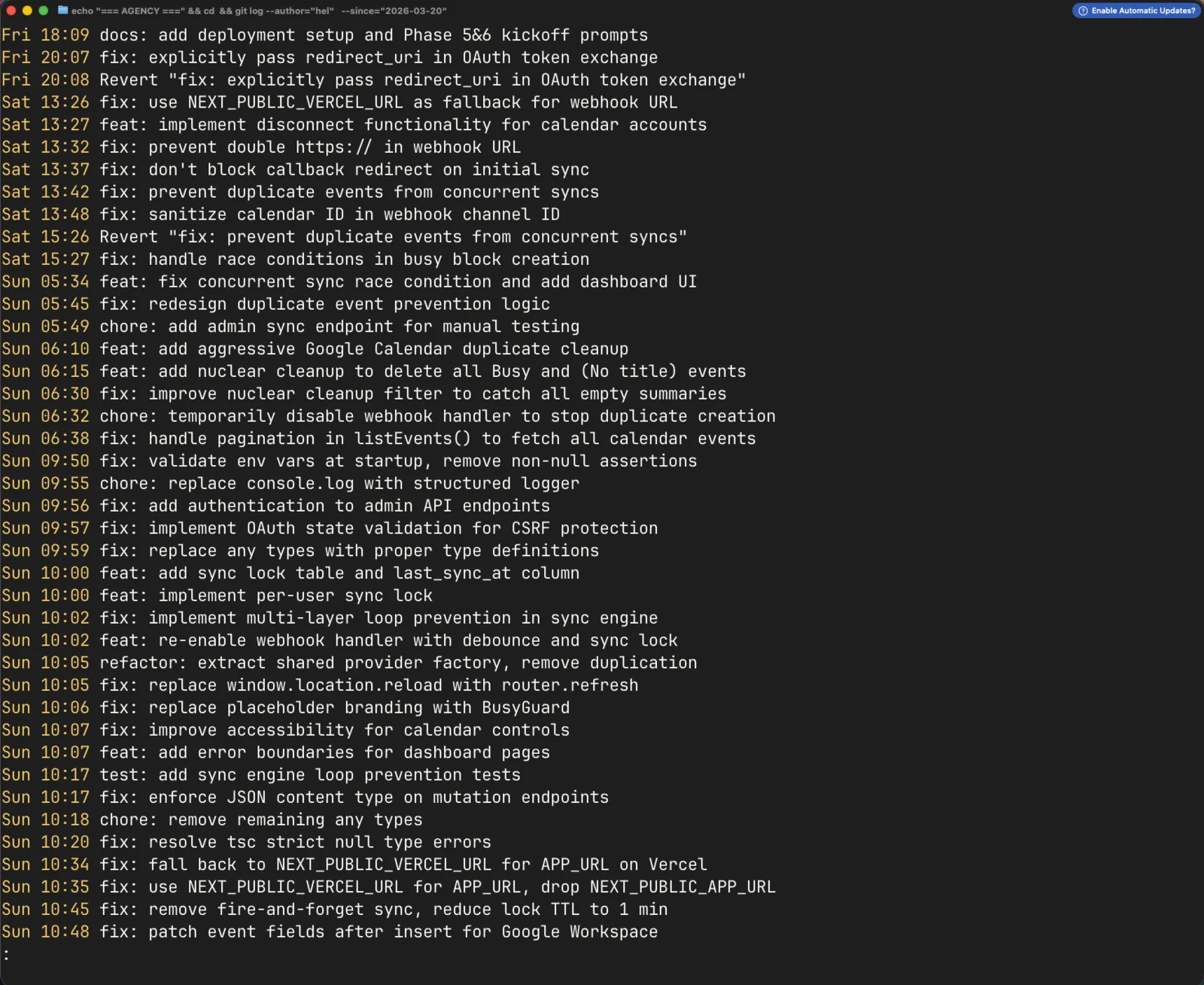

The Timeline

For the morbidly curious, here's how it unfolded in commits:

- 17:23 — Sync engine deployed. "It works!"

- 13:42 (next day) — First duplicate fix. Concurrent sync handling.

- 15:26 — Revert that fix. It didn't work.

- 15:27 — Different approach: catch unique constraint violations.

- 05:34 (day after) — Wake up. 300+ phantom events. Start escalating.

- 05:45 — Redesign duplicate prevention. DB as source of truth.

- 06:10 — Aggressive cleanup endpoint.

- 06:15 — Nuclear cleanup. Delete everything matching "Busy" or "(No title)".

- 06:32 — Disable webhooks entirely. Stop the bleeding.

- 06:38 — Discover listEvents() wasn't paginating. Fix it.

- 09:50 — Start the proper rebuild. Env validation, structured logging.

- 10:00 — Per-user sync lock.

- 10:02 — Multi-layer loop prevention.

- 10:02 — Re-enable webhooks with debounce.

- 11:32 — Rewrite loop detection, DB-only. The real fix.

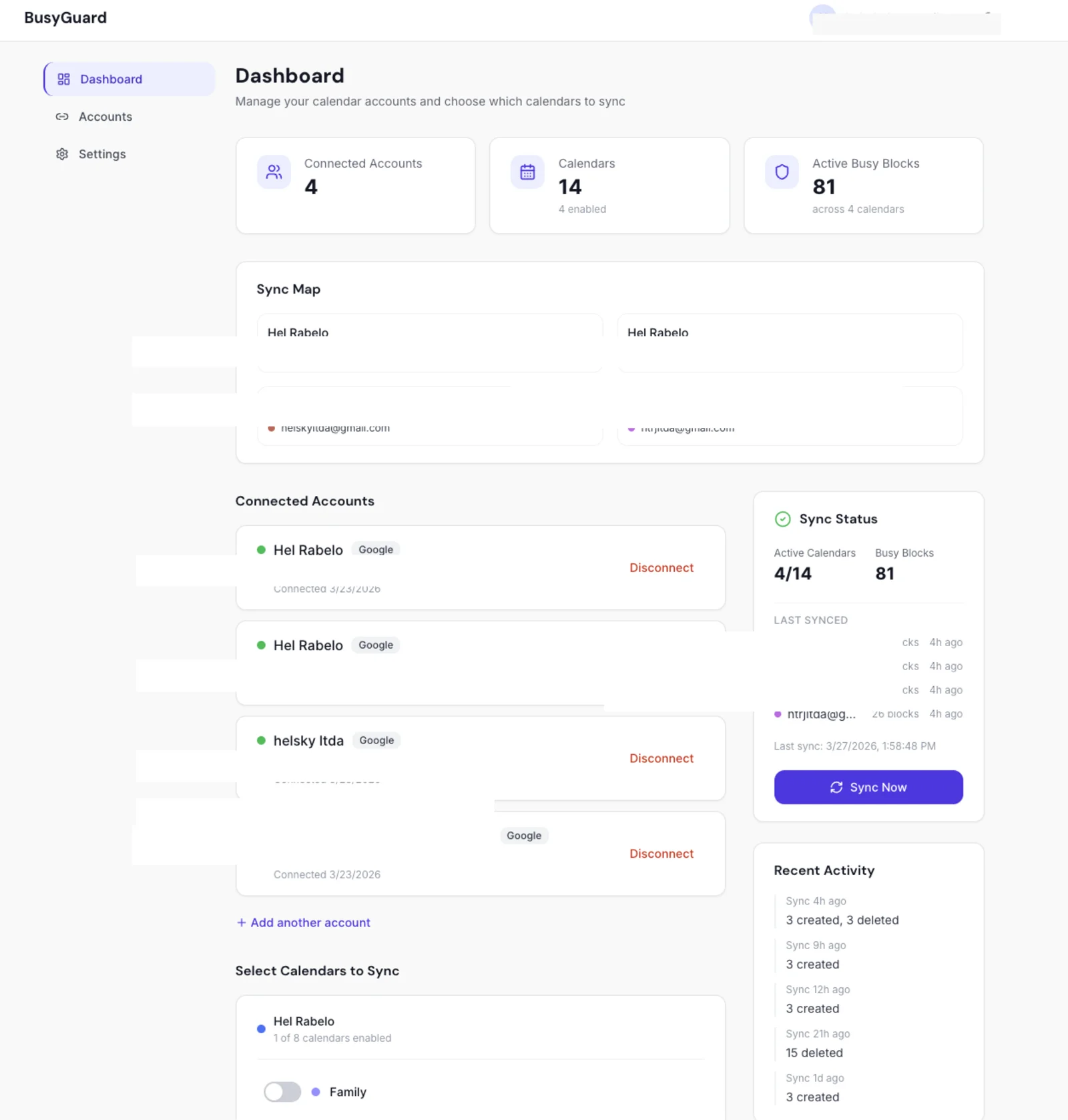

- 12:32 — Dashboard showing sync status and calendar controls.

- 12:44 — Tests proving the engine is duplicate-proof.

- 12:53 — 15 tests green. Done.

From nuclear option to proven fix in under seven hours. From "disable everything" to "here's the test suite proving it works."
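The 06:38 entry deserves a note of its own, because a non-paginating list call quietly hides events from both sync and cleanup. Below is a generic sketch of following nextPageToken until it runs out. `listAllEvents` and the `fetchPage` callback are hypothetical; `fetchPage` is assumed to wrap a single events.list call and forward the page token.

```typescript
interface EventPage<T> {
  items: T[];
  nextPageToken?: string;
}

// fetchPage is a caller-supplied function that performs one list call
// and passes the given page token through to the API.
async function listAllEvents<T>(
  fetchPage: (pageToken?: string) => Promise<EventPage<T>>
): Promise<T[]> {
  const all: T[] = [];
  let pageToken: string | undefined = undefined;
  do {
    const page: EventPage<T> = await fetchPage(pageToken);
    for (const item of page.items) all.push(item);
    pageToken = page.nextPageToken;
  } while (pageToken);
  return all;
}
```

Keeping the loop separate from the API client also makes it testable with canned pages, with no network involved.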

Why I'm Writing This

Because I searched for "Google Calendar sync engine duplicate events" and "extendedProperties stripped Workspace" and found almost nothing. The information that would have saved me a Saturday morning is scattered across buried Stack Overflow answers and closed GitHub issues.

If you're building a calendar sync tool, save yourself the debugging session:

- Never trust event metadata you didn't read back and verify. Google can and will strip fields silently.

- Use your database as the canonical record of what you created. External IDs, not content matching.

- Sync one direction at a time, one user at a time. Concurrent bidirectional sync is where dragons live.

- Reduce your blast radius. Sync 2 days, not 14. You can always expand later.

- Build a nuclear cleanup before you need one. You'll need one.

The sync engine works now. It's been running for five days without a single duplicate. But I'll never look at a "(No title)" calendar event the same way again.